See the latest book content here.

1 Supervised Machine Learning

Learning outcomes from this chapter:

- Forms of data: image data, tabular data, sequence data.

- The two forms of supervised learning: classification and regression.

- Hands on with basic classifiers: an ad-hoc classifier, least squares classifciation.

- Quantification of classification performance: accuracy, recall, and precision, F1 Score.

- Working with training, development and test sets.

- The concept of tuning hyper-parameters.

- The bias-variance tradeoff.

Source code for this unit is here.

1.1 A sea of data

Data is available in a variety of forms. This includes images, voice, text, tabular data, graphical data, and other forms.

In the typical case we consider a data point as a vector \(x\) of length \(d\). Each coordinate \(x_1,\ldots,x_d\) of the vector is a feature. As there are multiple data points, say \(n\), we denote the data points as \(x^{(1)},\ldots,x^{(n)}\). We can then also consider the data as a \(d \times n\) matrix \(X = [x^{(1)}~~\cdots~~x^{(n)}]\). This is a matrix with \(X_{ij} = x^{(j)}_i\) for \(i=1,\ldots, d\) and \(j=1,\ldots,n\). Sometimes the transpose of the matrix is used. This transposed form is especially convenient if thinking of the data in tabular form such as a spreadsheet or data frame (each row is an observation and each column is a feature/variable).

Note that an alternative to the data being of the form \(X = [x^{(1)}~~\cdots~~x^{(n)}]\) is sequence data where \(X = x^{(1)}, x^{(2)}, x^{(3)}, \ldots\) and the data sequence can essentially continue without bound. Such sequence data is typical for text, voice, or movies. We handle such cases in Unit 8 – Sequence Models.

1.1.1 Forms of the datapoint \(x\)

A data point \(x\) can be quite a complicated object, such as an image, or even a movie. In such a case, we can stil vectorize the data to represent it in a vector. The exact interpretation of the data varies. Here are some examples:

Voice recording: Here a simple representation is the amplitude of the recording at regular intervals, say every \(0.000125\) seconds (every \(1.25\) micro seconds) when recording at \(8,000\) samples per second. The amplitude can be a positive or negative number, sometimes defined over a finite range (e.g. only one of \(256\) values if each sample is a single byte). Hence for example a voice recording of an English sentence of \(5\) seconds will be a vector with \(d = 40,000\) entries (features).

A monochrome image: Here consider an image of \(d_1\) by \(d_2\) pixels with each pixel signifying the intensity e.g. \(0\) is black and \(255\) (or some other maximal value) is white. We can consider the image as a \(d_1 \times d_2\) matrix \(\tilde{x}\) and the vector \(x\) of length \(d = d_1 \cdot d_2\) as a vectorized formed of the matrix. That is if we consider column-major vectorization then for any \(k=1,\ldots,d\), we set \(\ell = ((k-1) \mod d_1) + 1\) and \[ x_k = x_{\ell,\frac{k-\ell}{d_1} + 1}. \] Similarly with row-major vectorization we set \(\ell = ((k-1) \mod d_2) + 1\) and \[ x_k = x_{\frac{k-\ell}{d_2} + 1,\ell}. \] As an example consider a \(2000 \times 1000\) (portrait) image. In this case \(d=2\times 10^6\) features (pixels).

A color image: Here each pixel is not just an intensity but rather an RGB (Red, Green, Blue) \(3\)-tuple. One way to represent this image is in the \(3\)-tensor \(\tilde{x}\) which is \(d_1\times d_2 \times 3\) dimensional. Again, similarly to the case of a monochrome image it is possible to vectorize the image to a \(d\) dimensional vector \(x\) with \(d = 3 \cdot d_1 \cdot d_2\). An image of the same dimensions as before has \(d = 8 \times 10^6\) features. Note that if considering say \(2^{16}\) values for each color component then then each feature is represented by \(2\) bytes and the memory size of \(x\) is \(16 \times 10^6\) bytes which is roughly \(16\) Megabytes (a Megabyte is typically considered to be \(2^{20}\) bytes which is only approximately \(10^6\)). In practice, images are often stored in a much more compressed format - although for deep learning purpuses they are often expanded to this type of bitmap format.

A (silent) color movie: Here there is another dimension \(d_3\) which is the number of frames in the movie and each frame is an image. Hence the movie \(\tilde{x}\) is a \(4\)-tensor of dimensions \(d_1\times d_2 \times 3 \times d_4\) . It can also (in principle) be vectorized into a vector \(x\). To get a feel for the dimension (number of features) in \(x\) assume it is a high quality movie as before with each image (frame) having \(6 \times 10^6\) features. If the movie has 30 frames per second and spans \(5\) minutes then it is composed of \(30\times 5 \times 60 = 9000\) images, so the total number of features is \(72 \times 10^9\). This would take about \(144\) Gigabytes in such raw form. Note that in practice movies are stored in compressed formats.

A text corpus: Text written in ASCII, Unicode, or other means is collection of characters. One way to consider text is as sequence data (similarly this can be done for voice recordings and movies). An alternative way is to summarize the text into a word frequency histogram where a collection of words in the dictionary, say \(d = 40,000\) words are mapped to the \(40,000\) entries of the vector \(x\). Then \(x_i\) is the frequency of how many times the word appeared in the text. Wit such a representation, it is quite clear that for non-huge texts \(x\) is a sparse vector.

Hetorgenous datasets: In many cases the features for a certain observation/data-point/individual are very hetorgenous. This is for the example the case coming from survey data, or other personal data where each feature (or subset of features) is completely different from the rest. This is the type of information appearing in a data frame. Features can be categorical (in which case we can encode them numerically) or numerical.

An alternative phrase common in statistics is the response variable. The labels: When dealing with supervised learning, each data point also has a label (or response variable). This is denoted as \(y_1,\dots,y_n\). In classification problems there is a finite set of values which the label can take, which we consider as \(\{1,\ldots,K\}\) without loss of generality. In regression problems the labels are real-valued.

1.1.2 Popular (example) datasets

Here are a few popular datasets:

- Boston Housing Prices Dataset

- Iris Dataset

- [Wisconsin Breast Cancer Dataset](https://archive.ics.uci.edu/ml/datasets/Breast+Cancer+Wisconsin+(Diagnostic)

- MNIST

- Fashion MNIST

- CIFAR-10

- ImageNet

- YouTube-8M

- Twitter Sentiment Analysis

- 20 Newsgroups

- A sound vocabulary and dataset

And here is a nice video describing a few of these:

See also:

1.1.3 The MNIST dataset

One of the most basic machine learning datasets is MNIST. In this case, each \(x^{(i)}\) is a \(28\times 28\) black and white image of a digit, and when vectorized is a \(d=784\) long vector. Each label \(y_i\) is an element from \(\{0,1,2,\ldots,9\}\) indicating the real meaning of the digit. The dataset is broken into a training set involving \(60,000\) images, and a testing set involving an additional \(10,000\) images. Hence in total \(n=70,000\).

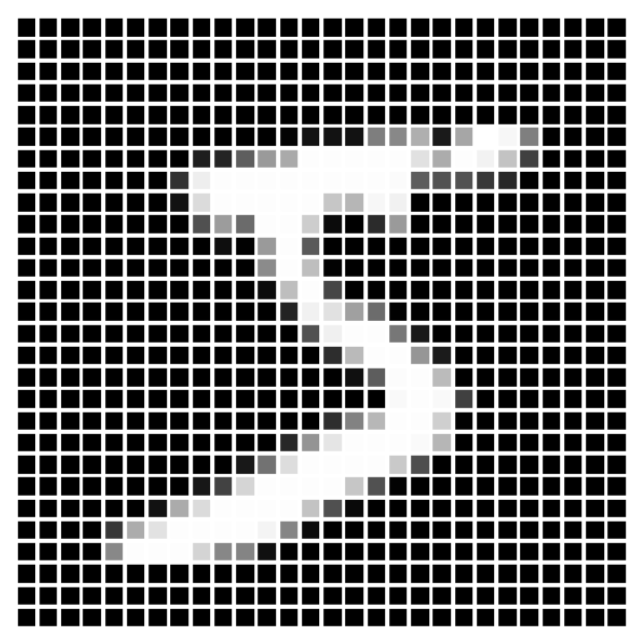

Figure 1.1: The first image in the MNIST training set. Clearly \(y_1 = 5\) for this image.

Figure 1.1: The first image in the MNIST training set. Clearly \(y_1 = 5\) for this image.

We use this example to also deviate momentarily from supervised learning for some exploratory data anslysis (EDA) as well as a bit of unsupervised learning.

Here as an example is the first image. Pixels have values in the range \([0,1]\) with \(0.0\) being “fully off” (black in this representation) and 1.0 being “fully on”.

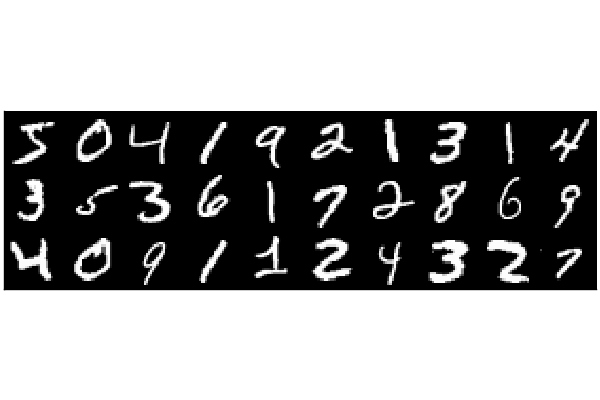

And here are the first 30 images

Figure 1.2: The first 30 MNIST images

Figure 1.2: The first 30 MNIST images

#Julia code

using Plots, Flux.Data.MNIST; pyplot()

imgs = MNIST.images()

heatmap(vcat(hcat(imgs[1:10]...),

hcat(imgs[11:20]...),

hcat(imgs[21:30]...)),ticks=false)The proportion of 0 pixels is about 80%, and within the non-zero pixels, the mean intensity is about \(0.68\). The proportion of pixels that are fully saturated (\(=1\)) is 0.00668. As presented below, label counts are approximately even among labels, hence this is a balanced dataset. We also plot density plots of the distribution of the number of pixels that are on, per digit.

![]() Figure 1.3: The distribution of the number of on-pixels per digit label

Figure 1.3: The distribution of the number of on-pixels per digit label

#Basic Exploratory Data Analysis (EDA) for MNIST with Julia

using Statistics, StatsPlots, Plots, Flux.Data.MNIST; pyplot()

imgs, labels = MNIST.images(), MNIST.labels()

x = hcat([vcat(float.(im)...) for im in imgs]...)

d, n = size(x)

@show (d,n)

onMeanIntensity = mean(filter((u)->u>0,x))

@show onMeanIntensity

prop0 = sum(x .== 0)/(d*n)

@show prop0

prop1 = sum(x .== 1)/(d*n)

@show prop1

print("Label counts: ", [sum(labels .== k) for k in 0:9])

p = plot()

for k in 0:9

onPixels = [ sum(x[:,i] .> 0) for i in (1:n)[labels .== k] ]

p = density!(onPixels, label = "Digit $(k)")

end

plot(p,xlabel="Number of non-zero pixels", ylabel = "Density")```

(d, n) = (784, 60000)

onMeanIntensity = 0.6833624860322157

prop0 = 0.8087977040816327

prop1 = 0.006681164965986395

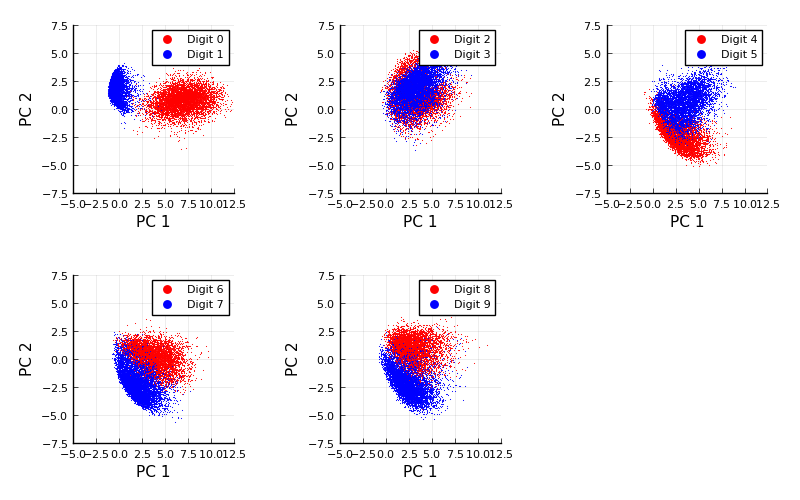

Label counts: [5923, 6742, 5958, 6131, 5842, 5421, 5918, 6265, 5851, 5949]We can also carry out Principal Component Analysis (PCA) for the whole dataset. We won’t cover PCA here, but this video may be useful.

When we carry out PCA and then plot each image based on the first two principle components, we get the following (this example is taken from here:

Figure 1.4: Basic Principal Component Analysis (PCA) for MNIST

Figure 1.4: Basic Principal Component Analysis (PCA) for MNIST

#Julia code

using MultivariateStats,LinearAlgebra,Flux.Data.MNIST,Measures,Plots

pyplot()

imgs, labels = MNIST.images(), MNIST.labels()

x = hcat([vcat(float.(im)...) for im in imgs]...)

pca = fit(PCA, x; maxoutdim=2)

M = projection(pca)

function compareDigits(dA,dB)

imA, imB = imgs[labels .== dA], imgs[labels .== dB]

xA = hcat([vcat(float.(im)...) for im in imA]...)

xB = hcat([vcat(float.(im)...) for im in imB]...)

zA, zB = M'*xA, M'*xB

default(ms=0.8, msw=0, xlims=(-5,12.5), ylims=(-7.5,7.5),

legend = :topright, xlabel="PC 1", ylabel="PC 2")

scatter(zA[1,:],zA[2,:], c=:red, label="Digit $(dA)")

scatter!(zB[1,:],zB[2,:], c=:blue, label="Digit $(dB)")

end

plots = []

for k in 1:5

push!(plots,compareDigits(2k-2,2k-1))

end

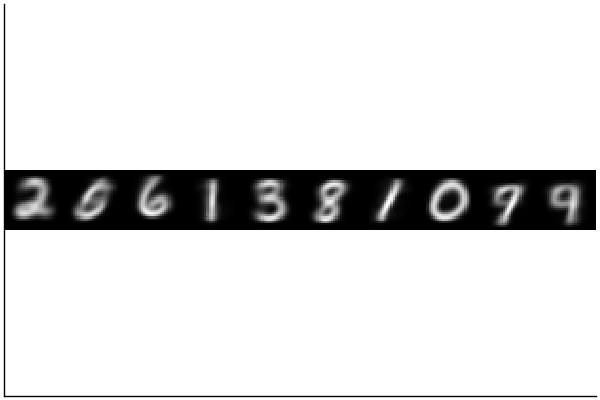

plot(plots...,size = (800, 500), margin = 5mm)We can also carry out k-means clusterming for the whole dataset with \(k=10\). When we do this we get centroids of the 10 clusters that resemble smoothed digits. This type of clustering algorithm works by iterating two steps: (1) Finding centers (sometimes called centroides) of the currently specified clusters. And (2) assigning the clusters based on the current centers. It is very simple. Here is a video that shows it for \(n=300\) with \(d=2\) and a specification of four clusters:

And here it is done for the MNIST dataset with the number of clusters naturally specified as \(10\).

Figure 1.5: MNIST clustering - the centroids

Figure 1.5: MNIST clustering - the centroids

#Julia code

using Clustering, Plots, Flux.Data.MNIST, Random; pyplot()

Random.seed!(0)

imgs, labels = MNIST.images(), MNIST.labels()

x = hcat([vcat(Float32.(im)...) for im in imgs]...)

clusterResult = kmeans(x,10)

heatmap(hcat([reshape(clusterResult.centers[:,k],28,28) for k in 1:10]...),

yflip=true,legend=false,aspectratio = 1,ticks=false,c=cgrad([:black, :white]))It is then interesting to look at the centers (centroids). None of them are any specific real image but they are rather the average image of each cluster.

1.2 Classification and Regression Problems

In supervised learning we wish to find a model \(\hat{f}(x)\) which takes in a data point \(x\) and computes an estimated label. In case of regression, labels are continuous variables and we can view the model as \(\hat{f}: {\mathbb R}^d \to {\mathbb R}\). In case of classification the labels fall in some finite set \(\{1,\ldots,K\}\) and we can view the model as \(\hat{f}: {\mathbb R}^d \to \{1,\ldots,K\}\).

1.2.1 Classification

Training of a supervised learning classification algorithm is the process of considering the data to produce a classifier \(\hat{f}\). Common algorithms include the support vector machine (SVM), random forest, and naive Bayes estimation. However our focus in this course is almost entirely on deep learning of which the perceptron, logistic regression, and softmax regression are basic examples, and convolutional deep neural networks are more involved examples.

In the case of a dataset like MNIST, a classifier \(\hat{f}\), is a function from the input image (vector) in \({\mathbb R}^d\) to the set of labels where \(K=10\) and the actual meaning of label \(k\) is the digit \(k-1\). Since \(K>2\) we say that such a classification problem is a multi-class problem, but in cases where \(K=2\) the problem is a binary classification problem. One may consider for example a relabeling of the labels in MNIST where every digit that is not the \(1\)-digit is labeled as \(-1\) (negative) and all the digits that are \(1\) digits are labeled as \(+1\) (positive). Such a binary classification would aim to find a classifier that distinguishes between digits that are not \(1\) and digits that are \(1\).

Note that often, we can use a collection of binary classifiers to create a multi-class classifier. For example, assume we had a binary classifier \(\hat{f}_i\) for each digit \(i\) (indicating if \(x\) is positive meaning it is from digit \(i\) or negative meaning it is not from digit \(i\)). Assume further that when we classify we actually get a measure of the certainty of the sample being positive or negative, meaning that if that measure is a large positive value we have high certainty it is positive, if it is a small positive value we have low certainty, and similarly with negative values. Denote this measure via \(\hat{f}^c_i: {\mathbb R}^d \to {\mathbb R}\). We can then create a multi-class classifier via, \[ \hat{f}(x) = argmax_{i=1,\ldots,K} \hat{f}_i^c(x). \]

This type of strategy is called the one-vs-rest (or one-vs-all) strategy as it splits a multi-class classification into one binary classification problem per class and then chooses the most sensible class. Note that an alternative is called the one-vs-one strategy in which case for each digit \(i\) we would create classification problems to distinguish between all other digits. We can denote these classifiers by \(\hat{f}_{ij}\) for distinguishing between digits \(i\) and \(j\) (and note that \(\hat{f}_{ji}\) is the same classifier). Then there are a total of \({K \choose 2} = K(K-1)/2\) such classifiers. If similarly we have certainty measures \(\hat{f}^c_{ij}\) then the overall multiclass classifier can be constructed via \[ \hat{f}(x) = argmax_{i=1,\ldots,K} \sum_{j \neq i} \hat{f}_{ij}^c(x). \]

1.2.2 Regression

In a case of a dataset like MNIST regression is not typically relevant. However assume hypothetically that there was another type of label associated with the dataset, indicating the duration it took to hand write each digit (say measured in milliseconds). We would still denote these labels via \(y^{(1)},\ldots,y^{(n)}\) only this time they would be real valued. Now a model \(\hat{f}\) is not called a classifier but rather predictor as it takes an image \(x\) and indicates an estimate \(\hat{f}(x)\) of the duration of time it took to write the label.

1.2.3 Applications of classification

Some of the biggest successes of Deep Learning in the past decade have been with regards to image classification. For example, lets try to use a famous pretrained network, VGG19, to classify this image,

Here we use the Metalhead.jl Julia package as a wrapper for the VGG19 pre-trained model. We’ll study more about neural network models such as this in the course, but for now think of the (pre-trained) neural network as a function \(\hat{f}: {\mathbb R}^{224 \times 224 \times 3} \to \{1,\ldots,1000\}\). This apple image isn’t exactly \(224 \times 224\) so a “wrapper” will translate the image into that format. VGG determines the image to be one of a thousand labels.

Figure 1.6: An image of an apple

Figure 1.6: An image of an apple

Figure 1.7: An image of a baby

Figure 1.7: An image of a baby

#Julia code

using Metalhead

#downloads about 0.5Gb of a pretrained neural network from the web

vgg = VGG19();

vgg.layers

#download an arbitrary image and try to classify it

download("https://deeplearningmath.org/data/images/appleFruit.jpg","appleFruit.jpg");

img = load("appleFruit.jpg");

classify(vgg,img)

#and again

download("https://deeplearningmath.org/data/images/baby.jpg","baby.jpg");

img = load("baby.jpg");

classify(vgg,img)"Granny Smith"

"diaper, nappy, napkin"

The output “Granny Smith” is correct in this case, but when we try for this image we got “diaper, nappy, napkin”, which is maybe somewhat close but not exactly correct.

1.2.4 Applications of regression

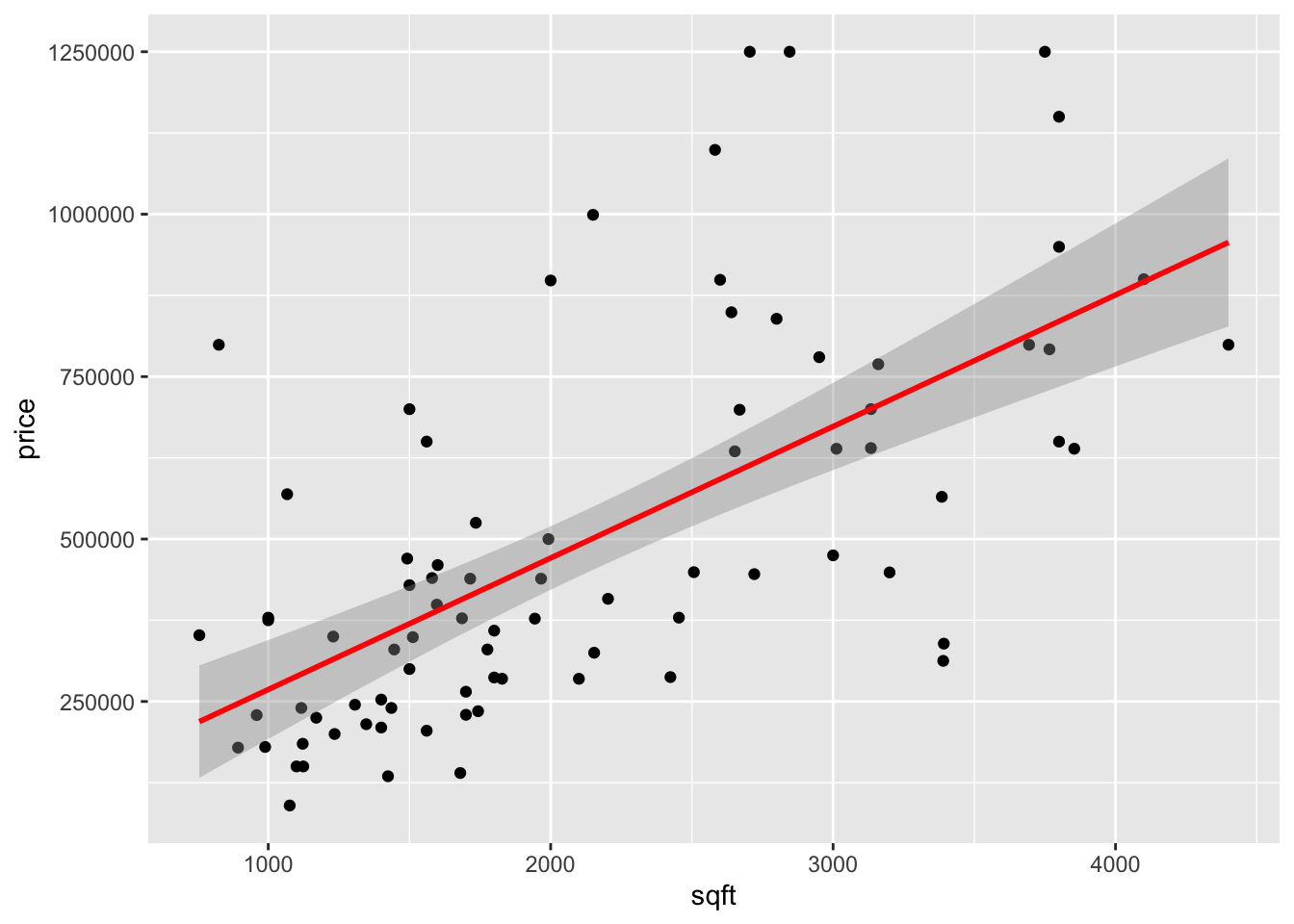

As a simple example consider a uni-variate feature \(X\) indicating the square footage (area) of a house, and \(Y\) as the selling price. In this case simple linear regression, \[ Y = \beta_0 + \beta_1 X + \epsilon, \] can work well to find a line of best fit and even provide confidence bands around the line if we are willing to make (standard) statistical assumptions about the noise component \(\epsilon\).

Figure 1.8: House price prediction

Figure 1.8: House price prediction

#R Code

library(ggplot2)

load("house_data.RData")

p1 <- ggplot(house, aes(sqft, price)) +

geom_point() + stat_smooth(method = "lm", col = "red") +

xlab("sqft") + ylab("price")+

theme(legend.position = 'bottom')

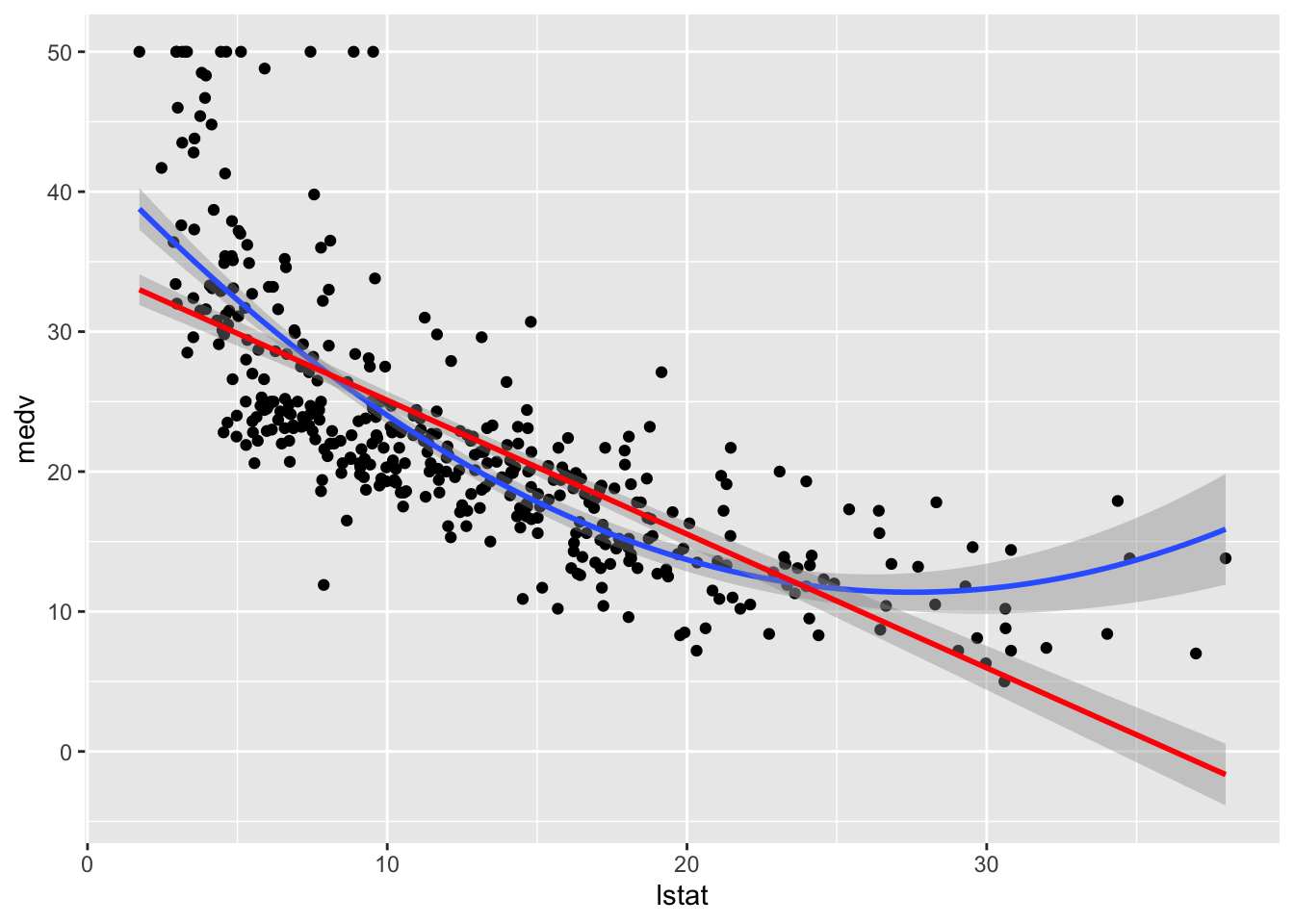

p1In other cases a simple linear model doesn’t work well and we can create additional features out of \(X\). For example a new feature is \(X^2\) and then we can fit the model, \[ Y = \beta_0 + \beta_1 X + \beta_2 X^2 + \epsilon. \]

# Load the data

library(MASS)

library(tidyverse)

library(caret)

data("Boston", package = "MASS")

# Split the data into training and test set

set.seed(123)

training.samples <- Boston$medv %>%

createDataPartition(p = 0.8, list = FALSE)

train.data <- Boston[training.samples, ]

test.data <- Boston[-training.samples, ]

model.lm <- lm(medv~lstat,data=train.data)

model.quad <- lm(medv~lstat+I(lstat^2),data=train.data)

RMSE.train <- sqrt(mean((train.data$medv-predict(model.lm))**2))

RMSE.test <- sqrt(mean((test.data$medv-predict(model.lm,newdata=test.data))**2))

#RMSE.train

#RMSE.test

RMSE.train.quad <- sqrt(mean((train.data$medv-predict(model.quad))**2))

RMSE.test.quad <- sqrt(mean((test.data$medv-predict(model.quad,newdata=test.data))**2))

#RMSE.train.quad

#RMSE.test.quad

tab <- data.frame("Model"=c("linear","quadratic"),"Train"=c(RMSE.train,RMSE.train.quad),"Test"=c(RMSE.test,RMSE.test.quad))

knitr::kable(tab,caption="Root Mean Squared Error",digits = 2)Table 1.1: Root Mean Squared Error

| Model | Train | Test |

|---|---|---|

| linear | 6.13 | 6.50 |

| quadratic | 5.48 | 5.63 |

Figure 1.9: House price prediction (in Boston Suburbs)

Figure 1.9: House price prediction (in Boston Suburbs)

ggplot(train.data, aes(lstat, medv) ) +

geom_point() +

stat_smooth(method = lm, formula = y ~ poly(x, 2, raw = TRUE))+

stat_smooth(method = lm, formula = y ~ x,col='red')These are classic applications of regression for which statistical practice and tradition is well suited. Model based fitting of linear models, generalized linear models, and other generalizations is now common practice and is a basic tool in the toolset of the statistican and data-scientist.

1.2.4.1 A second example

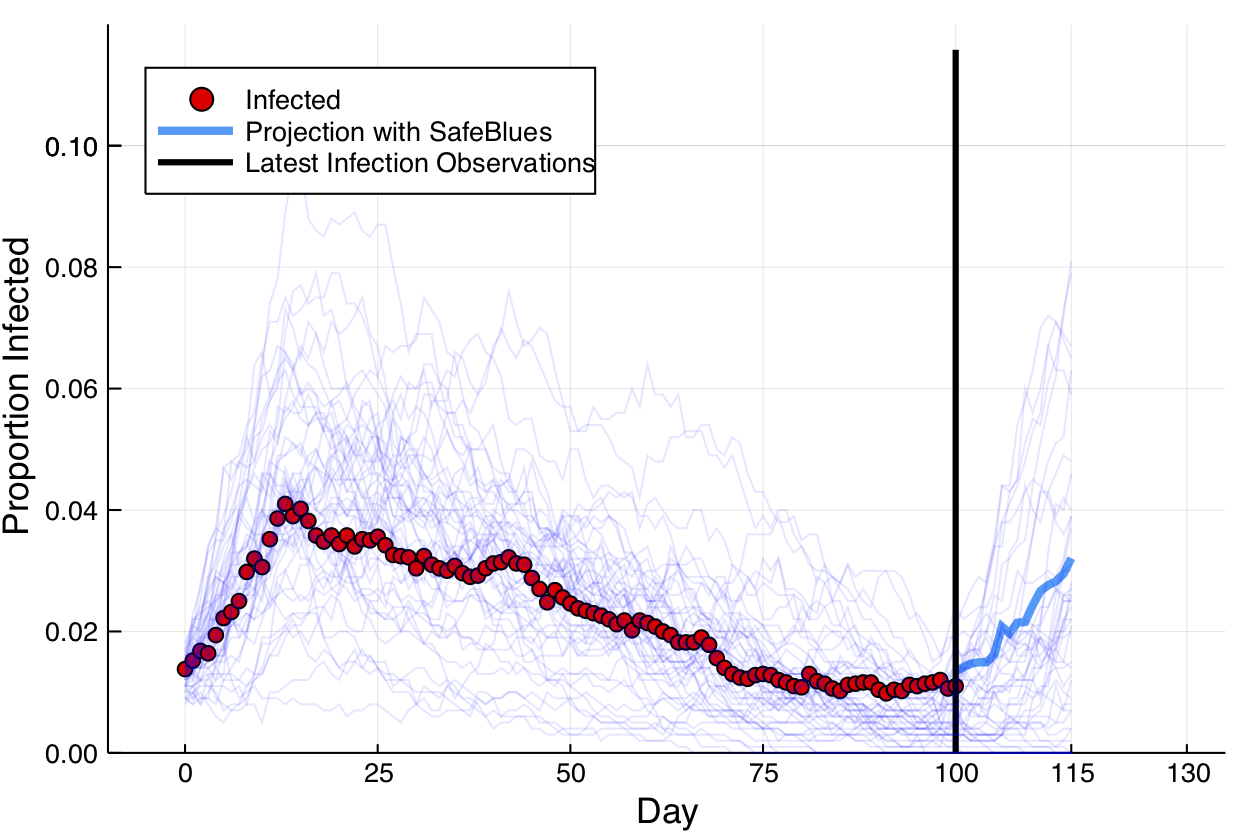

For this we consider the Safe Blues project. This experimental project deals with online measurements of a virtual safe epidemics. These virtual safe virus-like tokens are spread via cellular phones. The idea is then to exploit the fact that the virtual epidemic has some correlation to actual epidemic spread. In this simulation figure, a biological epidemic in red is observed during days 1-100. During that time, and also up to day 115, multiple Safe Blues (virtual) epidemics are observed. The virtual epidemics behave somewhat like the biological epidemic because the physical interactions that occur between people also (roughly) occur between their cellular phones.

The figure below presents a simulated (biological) epidemic in red, together with multiple simulated Safe Blues strands in thin blue.

Safe Blues strands in light blue behave somewhat like a real epidemic but can be measured in real-time.

A (relativly simple) neural network model relates the state of all \(d\) Safe Blues strands to the state of the biological virus. This model is trained at day 100, after 100 days of measurments of Safe Blues strands (\(n=100\)). Call the model \(\hat{f}\). Then during days \(101-115\) the on line Safe Blues strand measurments are used with \(\hat{f}\) to predict the level of the actual epidemic. This creates the blue curve.

This video explains a bit more:

1.3 The Train, Dev, Test workflow

The typical scenario in supervised learning is to use data to train a classifier on seen data and then use the classifier later on unseen data. For example with digit classification we can use the MNIST dataset to obtain a good classifier \(\hat{f}\) and then later, when presented with an unseen datapoint (image) \(\tilde{x}\) for which we don’t know the label \(\tilde{y}\), we can use \(\hat{f}(\tilde{x})\) as our best guess of the (never to be seen) label \(\tilde{y}\).

With such a process the underlying assumption is that the nature of the seen data is similar to the unseen data. Proper sampling is often needed for this.

The process of developing the classifier called learning and the usage of the classifier as part of a technological solutions such as apps or scientific modeling is sometimes called production. In more advanced scenarios active learning is used and the processes of learning and production are merged. We won’t deal much with active learning in this course.

The learning process is generally broken up into the acts of training, validation, and testing. In general, the training phase implies adjusting parameters of \(\hat{f}\) so that it predicts the data best. Further the validation phase implies adjusting hyper-parameters associated with \(\hat{f}\) or the training process itself. In practice, the training and validation phases are often mixed in the sense that we train, then validate, adjust parameters, train again, etc.

The testing phase implies simulating a production scenario using the seen data and checking how well the classifier or predictor works on that data. In general, testing should only be carried out once because going back and readjusting the classifier after testing will violate the validity of the test.

Before starting learning, we break the seen data into three different data chunks. The train set, the validation set, and the test set. The train set is the main dataset used to calibrate the learned parameters of \(\hat{f}\). The validation set is used to adjust hyper-parameters (including model selection) before repeating training with the train set. Finally, once a final model is chosen, it is evaluated on the test set. This application on the test set is used to given an indication of how well the classifier or predictor will work in production, on unseen data.

1.4 Example classifiers and performance measures

We now take a step back from the advanced neural network models and applications and explore few basic classifiers. As we do so we see different ways of determining the performance of the the model as well as basic notions of loss functions, optimization, and iterative training.

1.4.1 An AdHoc classifier for 1 digits

Deep learning and other general machine learning methods in this chapter are general and work well on a variety of datasets. Such generality is useful as it relieves one from having to custom fit the machine learning model to the exact nature of the problem at hand. Nevertheless as a vehicle for illustration of the aforementioned concepts of binary classification, we now present a model that is only suited for a specific problem. Note that even for the specific problem. This is for illustrative purposes.

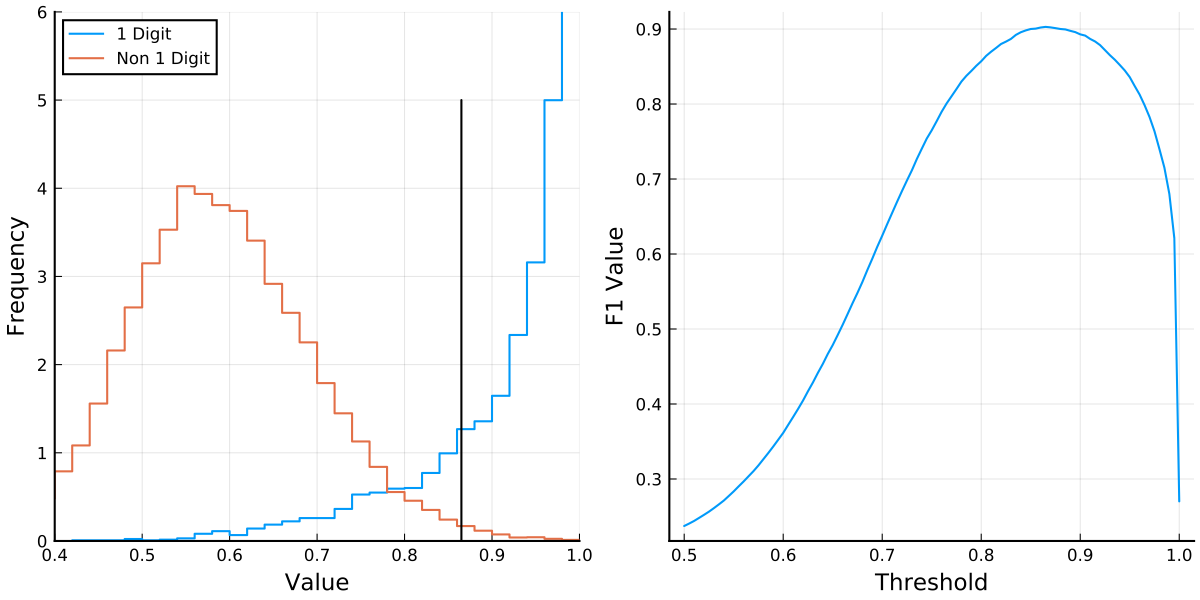

With the MNIST data we only wish to classify if an image is the digit \(1\) or not. How would we do that? Here we go for a direct method related to the way in which digits are drawn. We rely on the fact that \(1\) digits roughly appear as a vertical line while other digits have more lit up pixels not just on a vertical line within the image. This is clearly only a rough description because the character \(1\) involves more than just a vertical line.

With this observation, our classifier works by considering each of the \(28\) rows of the image and locating the maximal (brightest) pixel out of the \(28\) pixels in that row. We reason that if the image is a \(1\) digit, then on each row, there will generally only be a few neighboring bright pixels to the maximal pixel. However, if it is not a \(1\) digit, there may potentially be other bright pixels on the row. For this we include two pixels to the left of the brightest pixels and two pixels to the right as long as we don’t overshoot the boundary. We then sum up these \(5\) pixels and do so for every row. Finally we divide this total by the total sum of the pixels and call this function the peak proportion and represent it via, \[ \chi(x) = \sum_{i=1}^n \sum_{k=-2}^2 x_{i,m(x,i) + k}, \quad \text{where} \quad m(x,i) = argmax_{j=1,\ldots,28} x_{i,j}. \]

We may expect that for images representing a \(1\) digit, the peak proportion is high (close to unity), while for other images it isn’t. This is indeed the case as presented on the left of the following figure. The plot presents the distribution of the peak proportion value when considering \(60,000\) images in the MNIST train set. The (Julia) source that creates this Figure is here

See the source code here.

We thus see that \(\chi(\cdot)\) is a sensible statistic to consider because it allows to seperate positive samples from negative samples. Note that such separation would be useless when trying to compare other digits, as it is tailor made for the digit \(1\).

We thus see that \(\chi(\cdot)\) is a sensible statistic to consider because it allows to seperate positive samples from negative samples. Note that such separation would be useless when trying to compare other digits, as it is tailor made for the digit \(1\).

A question is now how to choose a threshold parameter, \(\theta\) where the classifier is

\[ \hat{f}_\theta(x) = \begin{cases} -1 & \chi(x) \le \theta,\\ +1 & \chi(x) > \theta. \end{cases} \]

In this case \(\theta\) is the parameter and in the training process we optimize the parameter \(\theta\). Clearly if \(\theta\) is very low (near 0) almost every digit would be classified as a 1 digit, whereas if \(\theta\) is high (near 1) almost every digit would be classified as a negative (or a 0 digit).

The question is now how to determine the “best value” of \(\theta\) and prior to that to define a suitable performance measure under which “best value”.

1.4.2 Accuracy

When considering a classifier \(\hat{f}: {\mathbf R}^d \to \{1,\ldots,K\}\), the most basic way to quantify the performance is via the accuracy. Given feature data \(x^{(1)},\ldots,x^{(m)}\) and labels \(y_1,\ldots,y_m\), which is either the training set, validation set, or test set, the accuracy is the proportion of labels that are correctly predicted. Namely, \[ \text{accuracy} = \frac{1}{m}\sum_{i=1}^m \mathbf{1}\{\hat{f}(x^{(i)} ) = y_i \}. \]

In many cases this is a very sensible measure. However when dealing with unbalanced data (where some labels are much more common than others), considering accuracy on its own is not enough.

For example, if we return to the goal of classifying if an MNIST digit is \(1\) (positive) or not (negative), then out of the test set of \(60,000\) images there are \(6,742\) positive samples and the remaining samples are negative. Hence a degenerate classifier such as \(\hat{f}(x) \equiv -1\) would achieve a accuracy of almost \(90\%\). This might sound good, but it is clearly not a valid measure of the quality of estimator in such a case.

1.4.3 Precision and Recall

It is common to use the pair of performance measures, precision and recall which we describe below.

Comparing with classical statistics, observe that the recall is essentially equivalent to the power of a statistical test as it quantifies the chance of detecting a positive in case the label is actually positive. This is also called sensitivity in biostatistics. However the precision does not agree with the specificity from biostatistics (or \(1-\alpha\) as denoted in statistical tests). Nevertheless, a high precision implies a low rate of false positives similarly to the fact that in statistical testing, a high \(1-\alpha\) value (or specificity) also means a low number of false positives.

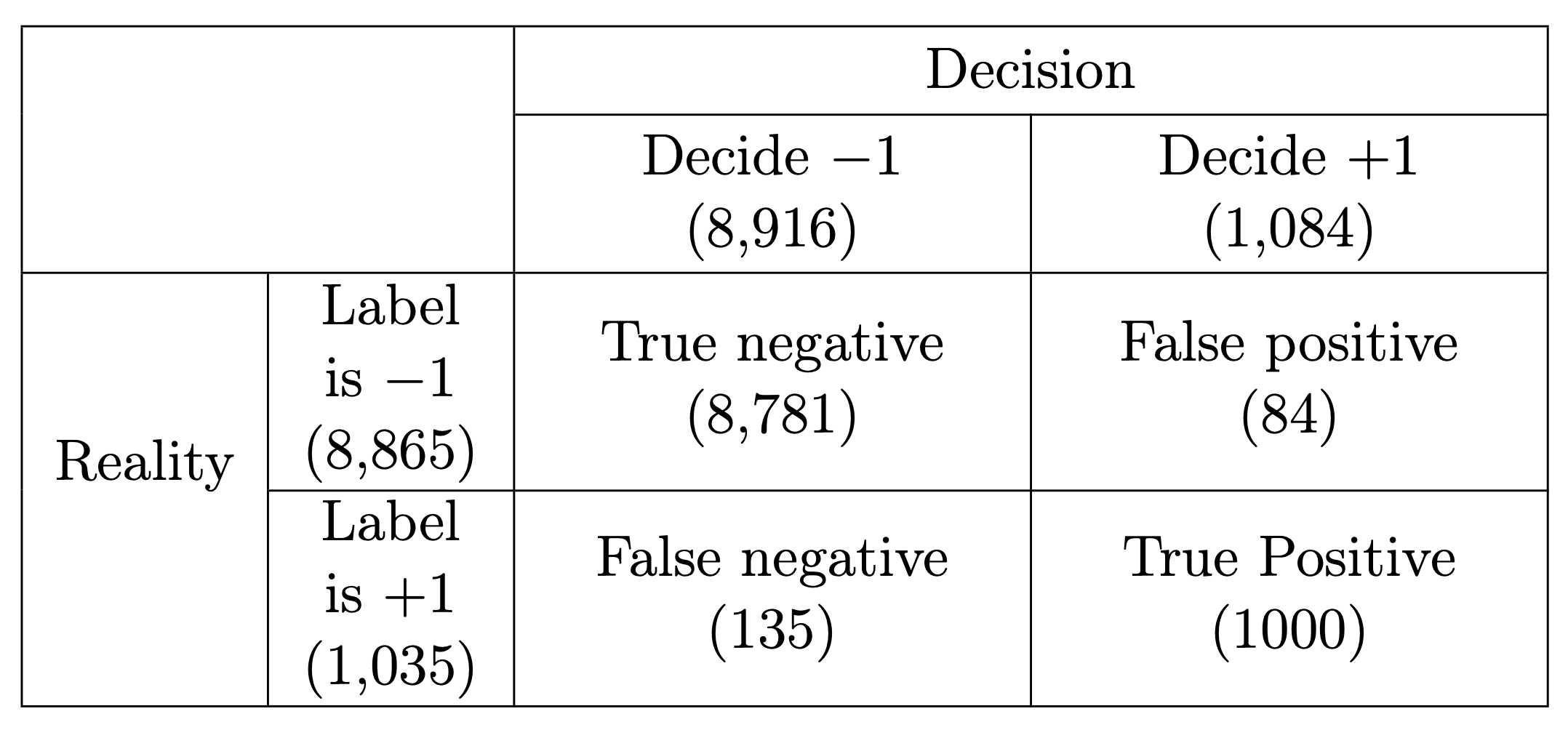

It is clear that ideally we wish the classifier to have as few false positives and false negatives as possible, but these are often competing objectives. Precision and recall help to quantify these objectives and are computed as follows where \(|\cdot|\) stands for the number of elements in a set. \[ \text{Precision} = \frac{\big|\text{true positive}\big|}{\big|\text{true positive}\big| + \big|\text{false positive}\big|}, \]

\[ \text{Recall} = \frac{\big|\text{true positive}\big|}{\big|\text{true positive}\big| + \big|\text{false negative}\big|}. \] In our case: \[ \text{Precision} = \frac{1000}{1000+84} = 92.25\%, \qquad \qquad \text{Recall} = \frac{1000}{1000+135} = 88.11\%. \]

The closer we are to \(100\%\), the better.

In designing classifiers, precision and recall often yield competing objectives where we can often tune parameters and improve one of the measures at the expense of the other. In the context of (classical) statistical testing, things are more prescribed since we fix \(\alpha\), the probability of Type I error, at an accepted level (e.g. \(0.05\)), and then search for a test that minimizes \(\beta\), the probability of Type II error (this is maximization of power, sensitivity, or recall). However in machine learning, there is more freedom. We don’t necessarily discriminate between the cost of false positives and false negatives, and even if we do, it can be by considering the application at hand.

1.4.4 Averaging Precision and Recall: The \(F_1\) score

A popular way to average precision and recall. is by considering their harmonic mean of the two. This is called the F\(_1\) score and is computed as follows: \[ F_1 = \frac{2}{\frac{1}{\text{Precision}} + \frac{1}{\text{Recall}}} = 2 \frac{\text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}}. \] In our example, \(F_1 = 90.13\%\). Here is a blog post descrbing the arithmetic, geometric, and harmonic means. While we don’t cover it further, we note that sometimes you can use a generalization of \(F_1\) called the \(F_\beta\) where \(\beta\) determines how much more important is recall in comparison to precision (do not confuse with \(\beta\) of hypothesis testing). However in general, if there is not a clear reason to price false positives and false negatives differently, using the \(F_1\) score as a single measure of performance is sensible.

We do this by plotting the \(F_1\) score as a function of \(\theta\) and choosing the optimal \(\theta\) which turns out to be \(0.865\). This then yields the classifier.

1.4.5 Linear classifiers

The previous classifier may be somewhat useful for determining if a digit is 1 or not, however the idea isn’t easily extended to other digits or other types of data. For this machine learning seeks to find generic algorithms that do not directly rely on the nature of the data at hand. One of the simplest such algorithms is simply the linear classifier which uses least squares.

We consider each image as a vector and obtain different least squares estimators for each type of digit using a one vs. rest approach. For digit \(\ell \in \{0,1,2,\ldots,9\}\) we collect all the training data vectors, with \(y_i = \ell\). Then for each such \(i\), we set \(y_i = +1\) and for all other \(i\) with \(y_i \neq \ell\), we set \(y_i = -1\). This labels our data as classifying “yes digit \(\ell\)” vs. “not digit \(\ell\)”. Call this vector of \(-1\) and \(+1\) values \(y^{(\ell)}\) for every digit \(\ell\). We compute, \[\begin{equation} \label{eq:regTime} \beta^{(\ell)} = A^\dagger y^{(\ell)} \qquad \mbox{for} \qquad \ell =0,1,2,\ldots,9, \end{equation}\] where \(A^\dagger\) is the \(785 \times 60,000\) dimensional pseudo-inverse associated with the \(60,000\) (training) images. It is the pseudo-inverse of the \(60,000 \times 785\) matrix \(A\) (allowing also a first column of \(1\)’s for a bias term). The matrix \(A\) is constructed with a first column of \(1\)’s, the second column having the first pixel of each image, the third column having the second pixel of each image, up to the last column having the \(784\)’th pixel of each image. The pixel order is not really important and can be row major, column major, or any other order, as long as it is consistent.

Now for every image \(i\), the inner product of \(\beta^{(\ell)}\) with the image (augmented with a \(1\) for the constant term) yields an estimate of how likely this image is for the digit \(\ell\). A very high value indicates a high likelihood and a low value is a low likelihood. We then classify an arbitrary image \(\tilde{x}\) by selecting, \[ \hat{y}(\tilde{x}) = \text{argmax}_{\ell = 0,\ldots,9} ~~ \beta^{(\ell)} \cdot \left[ \begin{matrix} 1 \\ \tilde{x} \end{matrix} \right]. \]

Observe that during training, this classifier only requires calculating the pseudo-inverse of \(A\) once as it is the for all digits.

It then only needs to remember \(10\) vectors of length \(785\), \(\beta^{(0)},\ldots,\beta^{(9)}\). Then based on these \(10\) vectors, a decision rule is very simple to execute.

This example is taken from here

using Flux, Flux.Data.MNIST, LinearAlgebra

using Flux: onehotbatch

imgs = Flux.Data.MNIST.images()

labels = Flux.Data.MNIST.labels()

nTrain = length(imgs)

trainData = vcat([hcat(float.(imgs[i])...) for i in 1:nTrain]...)

trainLabels = labels[1:nTrain]

testImgs = Flux.Data.MNIST.images(:test)

testLabels = Flux.Data.MNIST.labels(:test)

nTest = length(testImgs)

testData = vcat([hcat(float.(testImgs[i])...) for i in 1:nTest]...)

A = [ones(nTrain) trainData]

Adag = pinv(A)

tfPM(x) = x ? +1 : -1

yDat(k) = tfPM.(onehotbatch(trainLabels,0:9)'[:,k+1])

bets = [Adag*yDat(k) for k in 0:9]

classify(input) = findmax([([1 ; input])'*bets[k] for k in 1:10])[2]-1

predictions = [classify(testData[k,:]) for k in 1:nTest]

confusionMatrix = [sum((predictions .== i) .& (testLabels .== j))

for i in 0:9, j in 0:9]

accuracy = sum(diag(confusionMatrix))/nTest

println("Accuracy: ", accuracy, "\nConfusion Matrix:")

show(stdout, "text/plain", confusionMatrix)Accuracy: 0.8603

Confusion Matrix:

944 0 18 4 0 23 18 5 14 15

0 1107 54 17 22 18 10 40 46 11

1 2 813 23 6 3 9 16 11 2

2 2 26 880 1 72 0 6 30 17

2 3 15 5 881 24 22 26 27 80

7 1 0 17 5 659 17 0 40 1

14 5 42 9 10 23 875 1 15 1

2 1 22 21 2 14 0 884 12 77

7 14 37 22 11 39 7 0 759 4

1 0 5 12 44 17 0 50 20 8011.4.6 Beyond linear classifiers

In many ways, the neural networks that we study are a combination of multiple linear classifiers. Here is a very crude example where we seperate the input space by a single hyper-plane and train two alternative linear classifiers combined into a single classifier. The question is how to find the hyperplane. As a warm up for this unit we just do a simple (and inefficient) random search…

using Flux, Flux.Data.MNIST, LinearAlgebra, Random, Distributions

using Flux: onehotbatch

imgs = Flux.Data.MNIST.images()

labels = Flux.Data.MNIST.labels()

nTrain = length(imgs)

trainData = vcat([hcat(float.(imgs[i])...) for i in 1:nTrain]...)

trainLabels = labels[1:nTrain]

testImgs = Flux.Data.MNIST.images(:test)

testLabels = Flux.Data.MNIST.labels(:test)

nTest = length(testImgs)

testData = vcat([hcat(float.(testImgs[i])...) for i in 1:nTest]...)

Random.seed!(0)

wS = rand(784) .- 0.5

bS = 0.0

bestAcc = 0.0

α₀, α₁ = 2, 0.1

for epoch in 1:5

@show epoch

rn = rand(Normal(),784)

w = wS + α₁*rn/norm(rn)

b = bS + α₀*rand(Normal())

trainDataPos = trainData[(trainData*w .+ b) .>= 0,:]

trainLabelsPos = trainLabels[(trainData*w .+ b) .>= 0]

nPos = length(trainLabelsPos)

trainDataNeg = trainData[(trainData*w .+ b) .< 0,:]

trainLabelsNeg = trainLabels[(trainData*w .+ b) .< 0]

nNeg = length(trainLabelsNeg)

@show nPos,nNeg

Ap = [ones(nPos) trainDataPos]

AdagPos = pinv(Ap)

An = [ones(nNeg) trainDataNeg]

AdagNeg = pinv(An)

tfPM(x) = x ? +1 : -1

yDatPos(k) = tfPM.(onehotbatch(trainLabelsPos,0:9)'[:,k+1])

yDatNeg(k) = tfPM.(onehotbatch(trainLabelsNeg,0:9)'[:,k+1])

betsPos = [AdagPos*yDatPos(k) for k in 0:9]

betsNeg = [AdagNeg*yDatNeg(k) for k in 0:9]

classify(input) = findmax([

(input'w+b >= 0 ?

([1 ; input])'*betsPos[k]

:

([1 ; input])'*betsNeg[k])

for k in 1:10])[2]-1

predictions = [classify(testData[k,:]) for k in 1:nTest]

accuracy = sum(predictions .== testLabels)/nTest

@show accuracy

if accuracy > bestAcc

bestAcc = accuracy

wS, bs = w, b

println("Found improvement")

else

println("No improvement in this step")

end

println()

end

println("\nFinal accuracy: ", bestAcc)Observe that with such a classifier and only a short random search we are able to improve the test accuracy from 0.8603 to 0.8709.

epoch = 1

(nPos, nNeg) = (36101, 23899)

accuracy = 0.868

Found improvement

epoch = 2

(nPos, nNeg) = (31267, 28733)

accuracy = 0.8687

Found improvement

epoch = 3

(nPos, nNeg) = (43458, 16542)

accuracy = 0.8616

No improvement in this step

epoch = 4

(nPos, nNeg) = (27326, 32674)

accuracy = 0.8709

Found improvement

epoch = 5

(nPos, nNeg) = (26633, 33367)

accuracy = 0.8698

No improvement in this step

Final accuracy: 0.87091.5 Classicial considerations of overfitting

When trying to fit a model there are two main objectives: (1) fitting the training data well. (2) fitting unknown data well. These objectives often compete because a very tight fit to the data can be achieved by overfitting a model and then for unseen data the model does not perform well.

We now discuss how these objectives are quantified via model bias, model variance, and the bias–variance tradeoff. We also present practical regularization techniques for optimizing the bias variance tradeoff.

The key is to consider the data \(\{(x_i,y_i)\}_{i=1}^n\) as a random sample, where each pair \((x_i,y_i)\) is independent of all other data pairs. We use capital letters for the random variables representing these data points. Hence \(X\) is a random feature vector and \(Y\) is the associated random label. Note that clearly \(X\) and \(Y\) themselves should not be independent because then predicting \(Y\) based on \(X\) would not be possible.

The underlying assumption is that there exists some unknown relationship between \(x_i\) and \(y_i\) of the form \(y_i = f(x_i) + \text{noise}\). Then the inherent noise level can be represented via \({\mathbb E} [\big(Y - f(X)\big)^2]\). Now when presented with a classification or regression algorithm, \(\hat{f}(\cdot)\), we consider the expected loss, \[ {\mathbb E}[ L] = {\mathbb E} \Big[ \big(\tilde{Y}-\hat{f}(\tilde{X}\, ; \, D)\big)^{2}\Big]. \]

Here we use \(D\) to represent the random dataset used to train \(\hat{f}(\cdot)\) and the individual pair \((\tilde{X},\tilde{Y})\) is some fixed point from the unseen (future) data. This makes \(\hat{f}(\cdot \, ; \, D)\) a random model with randomness generated from \(D\) (as well as possible randomness from the training algorithm). The expectation in is also with respect to the random unseen pair \((\tilde{X},\tilde{Y})\).

With these assumptions, a sum of squares decomposition can be computed and yields the bias-variance-noise decomposition equation, \[ {\mathbb E}[L]=\underbrace{\left({\mathbb E}[\hat{f}(\tilde{X}\, ; \, D)]-{\mathbb E}[f(\tilde{X})]\right)^{2}}_{\text{Bias squared of~} \hat{f}(\cdot)} +\underbrace{\text{Var} \big(\hat{f}(\tilde{X}\, ; \, D)\big)}_{\text{Variance of}~\hat{f}(\cdot)} + \underbrace{{\mathbb E}[\big(Y - f(X)\big)^2]}_{\text{Noise of~}(X,Y)}. \]

The main takeaway from is that if we ignore the inherent noise, the loss of the model has two key components, bias, and variance. The bias is a measure of how the model \(\hat{f}(\cdot)\) misclassifies the correct relationship \({f}(\cdot)\). That is in models with high bias, \(\hat{f}(\cdot)\) does not accurately describe \(f(\cdot)\). That is high bias generally implies under-fitting. Similarly, models with low bias are detailed descriptions of reality.

The variance is a measure of the variability of the model \(\hat{f}(\cdot\, ; \, D)\) with respect to the random sample \(D\). Models with high variance are often overfit (to the training data) and do not generalize (to unseen data) well. Similarly, models with low variance are much more robust to the training data and generalize to the unseen data much better.

Understanding these concepts, even if qualitatively, allows the machine learning practitioner to tune the models to the data. The perils of high bias (under-fitting) and high variance (overfitting) can sometimes be quantified and even tuned via tuning parameters as we present in the example below. Similar analysis to the derivation that leads to can also be attempted for other loss functions. However, the results are generally less elegant. Nevertheless, the concepts of model bias, model variance, and the bias variance tradeoff still persist. For example in a classification setting you may compare the accuracy obtained on the training set to that obtained on a validation set. If there is a high discrepancy where the train accuracy is much higher than the validation accuracy, then there is probably a variance problem indicating that the model is overfitting.

There are several approaches for controlling model bias, model variance and optimizing the bias-variance tradeoff. One key approach is called regularization. In regression models, it is common to add regularization terms to the loss function. In deep learning, the simple randomized technique of dropout has become popular (this is covered in Unit 4).

The hyper-parameter tuning process which often (implicitly) deals with the bias-variance tradeoff can be carried out in multiple ways. One notable way which we explore here is \(k\)-fold cross validation. Here the idea is to break up the training set into \(k\) groups. Then use \(k-1\) of the groups for training and \(1\) group for validation. However this is repeated \(k\) times so that each of the \(k\) groups gets to be a validation set once. Then the results over all \(k\) training sessions are averaged. This process can then be carried out over multiple values of the hyper-parameter in question and the loss can be plotted or analyzed. In fact, one may even estimate the bias and the variance terms in this way but we do not do so here. Still, we use \(k\)-fold cross validation in the ridge regression example that follows.

1.5.1 Addition of Regularization Terms

The addition of a regularization term to the loss function is the most classic and common regularization technique. Denote now the parameters of the model as \(\beta\). The main idea is to take the data fitting objective, \[\begin{equation} \label{eq:Nonregularization} \min_{\beta} L(\text{data},\beta), \end{equation}\] and augment the loss function \(L(\cdot,\cdot)\) with an additional regularization term \(R(\lambda,\beta)\) that depends on a regularization parameter \(\lambda\) and the estimated parameters \(\beta\). The optimization problem then becomes, \[\begin{equation} \label{eq:regularization} \min_{\beta} ~L(\text{data},\beta) + R(\lambda,\beta). \end{equation}\]

Now \(\lambda\), often a scalar in the range \([0,\infty)\) but also sometimes a vector, is a hyper-parameter that allows us to optimize the bias-variance tradeoff. A common general regularization technique is elastic net where \(\lambda = [\lambda_1 ~ \lambda_2]^T\) and, \[ R(\lambda,\beta) = \lambda_1 ||\beta||_1 + \lambda_2 ||\beta||^2. \]

Here \(||\beta||^2 = \sum_{i=1}^{p+1} \beta_i^2\) and \(||\beta||_1 = \sum_{i=1}^{p+1} | \beta_i |\) when \(p+1\) is the dimension of the parameter space. Hence the values of \(\lambda_1\) and \(\lambda_2\) determine what kind of penalty the objective function will pay for high values of \(\beta_i\). Clearly with \(\lambda_1, \lambda_2 = 0\) the original objective isn’t changed. Further as \(\lambda_1, \lambda_2 \to \infty\) the estimates \(\beta_i \to 0\) and the data is fully ignored. As \(\lambda_1\) or \(\lambda_2\) grows the bias in the model grows, however the variance is decreased as overfitting is mitigated. The virtue of regularization is that there is often a magical `sweet spot’ for \(\lambda\) where the objective does a much better job than the non-regularized .

Particular cases of elastic net are the classic ridge regression (also called Tikhonov regularization) and LASSO standing for least absolute shrinkage and selection operator. In the former \(\lambda_1 = 0\) and only \(\lambda_2\) is used, and in the latter \(\lambda_2 = 0\) and only \(\lambda_1\) is used. One of the virtues of LASSO (also present in the more general elastic net case) is that the \(||\beta||_1\) cost allows the algorithm to knock out variables by ``zeroing out’’ their \(\beta_i\) values. Hence LASSO is very useful as an advanced model selection technique.

The case of ridge regression is slightly simpler to analyze than LASSO or the general elastic net. In this case the data fitting problem can be represented as, \[ \min_{\beta \in \mathbb{R}^{p+1}} || A \beta - y ||^2 + \lambda ||\beta||^2, \] where we now consider \(\lambda\) as a scalar (previously \(\lambda_2\)) in the range \([0,\infty)\). Note also that here \(y\) denotes a vector of all the labels \(\{y_i\}_{i=1}^n\). The squared norm, \(||\cdot||^2\) of a vector is just its inner product with itself and \(A\) is the \(n \times (p+1)\) design matrix comprised of \(x\) values and the first column is a column of ones. The problem can just be recast as \[ \min_{\beta \in \mathbb{R}^{p+1}} \left| \left| \left[ \begin{array}{c} A \\ \sqrt{\lambda} I \end{array} \right] \beta - \left[ \begin{array}{c} y \\ 0 \end{array} \right] \right| \right|^2. \] The pseudo-inverse associated with the augmented matrix, \[ \tilde{A} = \left[ \begin{array}{c} A \\ \sqrt{\lambda} I \end{array} \right], \] is \(\tilde{A}^{\dagger} = (A^TA + \lambda I)^{-1}A^T\). Hence the parameter estimate is \(\hat{\beta} = (A^TA + \lambda I)^{-1}A^T y\).

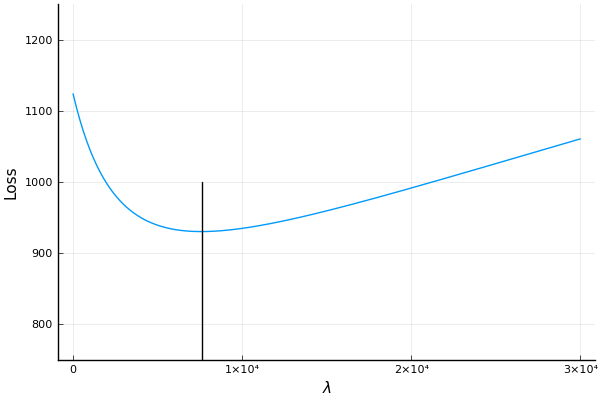

See the source code here. Here is an example of ridge regression where we carry out \(k\)-fold cross validation to find a good \(\lambda\) value. Here we use the , for from . A search over a range of \(\lambda\) values is presented in the figure where we see that the optimal \(\lambda\) is at around \(\lambda = 7,600\).

Searching for the best \(\lambda\) value using \(k\)-fold cross validation.

Page built: 2021-03-04 using R version 4.0.3 (2020-10-10)